
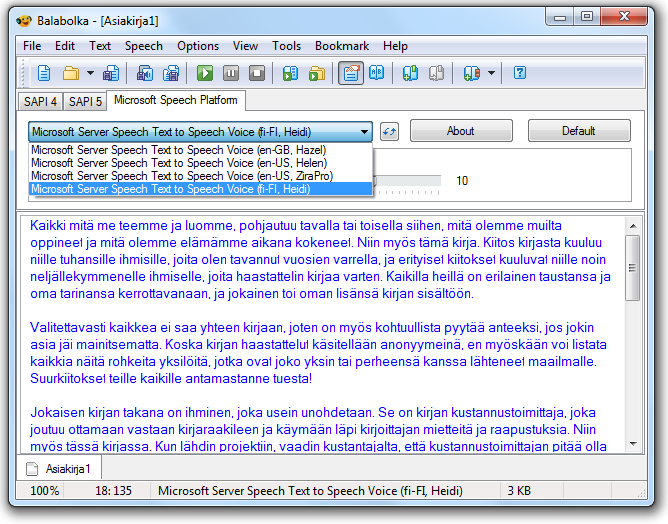

Balabolka is an all-in-one text-to-speech application that supports earlier versions of SAPI, the Microsoft Speech API, to narrate the text you enter. By contrast, Windows Speech Recognition remains more powerful than Cortana: it drives both speech-to-text dictation and voice control. This article will show you what Speech Recognition can do and how to use it.

For many people, having their typed words read aloud is a necessity. If you fall into this category, Ultra Hal Text-to-Speech Reader offers a simple program for doing so.

In continuation of a previous contribution, Text to Speech in WPF, here is a small sample that recognizes speech and shows the resulting text.

In this article I will talk once more about Windows Speech Recognition and how to benefit from all its advanced configuration options. I will show you how to create […]

E-triloquist is a PC-based communication aid for speech-impaired people. It serves as an electronic voice for those who can't speak on their own.

Alive Text to Speech is text-to-speech software that reads text in any application and converts text to MP3, WAV, OGG or VOX files.

Speech recognition (SR), as Wikipedia defines it, is the interdisciplinary subfield of computational linguistics that develops methodologies and technologies enabling computers to recognize spoken language and translate it into text. It is also known as automatic speech recognition (ASR), computer speech recognition, or speech to text (STT). It incorporates knowledge and research from the fields of linguistics, computer science, and electrical engineering.

Some SR systems use "training" (also called "enrollment"), in which an individual speaker reads text or isolated vocabulary into the system. The system analyzes the person's specific voice and uses it to fine-tune the recognition of that person's speech, resulting in increased accuracy. Systems that do not use training are called "speaker-independent"; systems that use training are called "speaker-dependent". Recognizing the speaker can simplify the task of translating speech in systems that have been trained on a specific person's voice, or it can be used to authenticate or verify the identity of a speaker as part of a security process.

From the technology perspective, speech recognition has a long history with several waves of major innovations.

Most recently, the field has benefited from advances in deep learning and big data. The advances are evidenced not only by the surge of academic papers published in the field, but more importantly by the worldwide industry adoption of a variety of deep learning methods in designing and deploying speech recognition systems. These speech industry players include Google, Microsoft, IBM, Baidu, Apple, Amazon, Nuance, SoundHound, iFlytek and CDAC, many of which have publicized the core technology in their speech recognition systems as being based on deep learning.

Here is a complete example using C# and System.Speech for converting speech to text. The code can be divided into two main parts: configuring the […]

Text to Speech. In addition to Locabulary Lite, another great text-to-speech program available for the iPhone/iPod and the iPad is "Speak it!".

History

Their system worked by locating the formants in the power spectrum of each utterance. Flanagan took over. Raj Reddy was the first person to take on continuous speech recognition, as a graduate student at Stanford University in the late 1960s. Previous systems required users to pause after each word. Reddy's system was designed to issue spoken commands for the game of chess.

Also around this time, Soviet researchers invented the dynamic time warping (DTW) algorithm and used it to create a recognizer capable of operating on a 200-word vocabulary. Although DTW would be superseded by later algorithms, the technique of dividing the signal into frames would carry on. Achieving speaker independence was a major unsolved goal of researchers during this period.

In 1971, DARPA funded five years of speech recognition research through its Speech Understanding Research program, with ambitious end goals including a minimum vocabulary size of 1,000 words. BBN, IBM, Carnegie Mellon and Stanford Research Institute all participated in the program. This disappointment led to DARPA not continuing the funding.
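
To make the DTW idea concrete, here is a minimal sketch of template matching with dynamic time warping. It assumes each utterance has already been divided into frames of feature vectors; the function names (`frame_distance`, `dtw_distance`, `recognize`) and the toy "yes"/"no" templates are hypothetical illustrations, not a reconstruction of the original Soviet recognizer.

```python
import math
from typing import Dict, List, Sequence

Frame = Sequence[float]


def frame_distance(a: Frame, b: Frame) -> float:
    """Euclidean distance between two feature frames."""
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)))


def dtw_distance(query: List[Frame], template: List[Frame]) -> float:
    """Classic O(n*m) dynamic time warping cost between two frame sequences."""
    n, m = len(query), len(template)
    inf = float("inf")
    # cost[i][j] = minimal cumulative cost of aligning query[:i] with template[:j]
    cost = [[inf] * (m + 1) for _ in range(n + 1)]
    cost[0][0] = 0.0
    for i in range(1, n + 1):
        for j in range(1, m + 1):
            d = frame_distance(query[i - 1], template[j - 1])
            # Allowed steps: diagonal match, or stretch either sequence in time.
            cost[i][j] = d + min(cost[i - 1][j - 1], cost[i - 1][j], cost[i][j - 1])
    return cost[n][m]


def recognize(query: List[Frame], templates: Dict[str, List[Frame]]) -> str:
    """Isolated-word recognition: return the label of the closest template."""
    return min(templates, key=lambda word: dtw_distance(query, templates[word]))


if __name__ == "__main__":
    # Hypothetical 1-D "features"; real systems use vectors such as cepstra.
    templates = {
        "yes": [[0.0], [1.0], [2.0], [1.0], [0.0]],
        "no": [[2.0], [2.0], [0.0], [0.0], [2.0]],
    }
    # A slower "yes": the same shape, stretched in time.
    spoken = [[0.0], [0.0], [1.0], [1.0], [2.0], [2.0], [1.0], [0.0]]
    print(recognize(spoken, templates))  # yes
```

Because the warping path may repeat frames from either sequence, a slower or faster utterance of the same word can still align with zero cost, which is exactly the speaking-rate variation DTW was designed to absorb.
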
At CMU, Raj Reddy's students James Baker and Janet Baker began using the hidden Markov model (HMM) for speech recognition. Katz introduced the back-off model in 1987. During the same period, CSELT was also using HMMs (diphones had already been studied there) to recognize languages such as Italian. At the end of the DARPA program in 1976, the best computer available to researchers was the PDP-10 with 4 MB of RAM.

As the technology advanced and computers got faster, researchers began tackling harder problems such as larger vocabularies, speaker independence, noisy environments and conversational speech. In particular, this shift to more difficult tasks has characterized DARPA funding of speech recognition since the 1980s. For example, progress was made on speaker independence first by training on a larger variety of speakers and later by doing explicit speaker adaptation during decoding. Further reductions in word error rate came as researchers shifted acoustic models to be discriminative instead of using maximum likelihood models. By this point, the vocabulary of the typical commercial speech recognition system was larger than the average human vocabulary.

The Sphinx-II system, developed at CMU by Xuedong Huang, was the first to do speaker-independent, large-vocabulary, continuous speech recognition, and it had the best performance in DARPA's 1992 evaluation. Huang went on to found the speech recognition group at Microsoft in 1993. Raj Reddy's student Kai-Fu Lee joined Apple where, in 1992, he helped develop a speech interface prototype for the Apple computer known as Casper.

In 2000, Lernout & Hauspie acquired Dragon Systems and was an industry leader until an accounting scandal brought an end to the company in 2001. The L&H speech technology was bought by ScanSoft, which became Nuance in 2005. Apple originally licensed software from Nuance to provide speech recognition capability to its digital assistant Siri.

Four teams participated in the EARS program: IBM, a team led by BBN with LIMSI and Univ. […] The GALE program focused on Arabic and Mandarin broadcast news speech. Google's first effort at speech recognition came in 2007, after hiring some researchers from Nuance. The recordings from GOOG-411 produced valuable data that helped Google improve its recognition systems. Google voice search is now supported in over 30 languages.

In the United States, the National Security Agency has made use of a type of speech recognition for keyword spotting since at least 2006. Recordings can be indexed, and analysts can run queries over the database to find conversations of interest. Some government research programs focused on intelligence applications of speech recognition, e.g. DARPA's EARS program and IARPA's Babel program.

In the early 2000s, speech recognition was still dominated by traditional approaches such as hidden Markov models combined with feedforward artificial neural networks. Around 2007, LSTM networks trained by Connectionist Temporal Classification (CTC) started to outperform traditional speech recognition in certain applications. Researchers have begun to use deep learning techniques for language modeling as well.

In the long history of speech recognition, both shallow and deep forms (e.g. recurrent nets) of artificial neural networks had been explored for many years […]. Most speech recognition researchers who understood such barriers subsequently moved away from neural nets to pursue generative modeling approaches, until the resurgence of deep learning starting around 2009-2010 […] (Hinton et al.). Hidden Markov models (HMMs) are widely used in many systems. Language modeling is also used in many other natural language processing applications, such as document classification or statistical machine translation.

Hidden Markov models

These are statistical models that output a sequence of symbols or quantities. HMMs are used in speech recognition because a speech signal can be viewed as a piecewise stationary or short-time stationary signal: on a short time scale (e.g. 10 milliseconds), speech can be approximated as a stationary process. Speech can be thought of as a Markov model for many stochastic purposes. Another reason HMMs are popular is that they can be trained automatically and are simple and computationally feasible to use. In speech recognition, the hidden Markov model would output a sequence of n-dimensional real-valued vectors (with n being a small integer, such as 10), outputting one of these every 10 milliseconds.
The vectors would consist of cepstral coefficients, which are obtained by taking a Fourier transform of a short time window of speech, taking the logarithm of the magnitude spectrum, and decorrelating it using a cosine transform, then keeping the first (most significant) coefficients. The hidden Markov model will tend to have in each state a statistical distribution that is a mixture of diagonal covariance Gaussians, which will give a likelihood for each observed vector. Each word, or (for more general speech recognition systems) each phoneme, will have a different output distribution; a hidden Markov model for a sequence of words or phonemes is made by concatenating the individual trained hidden Markov models for the separate words and phonemes. Described above are the core elements of the most common, HMM-based approach to speech recognition. Modern speech recognition systems use various combinations of a number of standard techniques in order to improve results over the basic approach described above. A typical large-vocabulary system would need context dependency for the phonemes (so phonemes with different left and right context have different realizations as HMM states); it would use cepstral normalization to normalize for different speaker and recording conditions; for further speaker normalization it might use vocal tract length normalization (VTLN) for male-female normalization and maximum likelihood linear regression (MLLR) for more general speaker adaptation. The features would have so-called delta and delta-delta coefficients to capture speech dynamics and in addition might use heteroscedastic linear discriminant analysis (HLDA); or might skip the delta and delta-delta coefficients and use splicing and an LDA-based projection, followed perhaps by heteroscedastic linear discriminant analysis or a global semi-tied covariance transform (also known as maximum likelihood linear transform, or MLLT).
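
The feature and scoring steps just described can be sketched in plain Python. This is a minimal illustration under stated assumptions: it computes a simple (non-mel) cepstrum with a Hamming window, a naive DFT and an unnormalized DCT-II (MFCC pipelines usually add a mel filterbank before the log), shows per-utterance cepstral mean normalization as one form of cepstral normalization, and scores a vector under a mixture of diagonal-covariance Gaussians. All function names and the synthetic 440 Hz frame are hypothetical.

```python
import cmath
import math
from typing import List, Sequence


def dft_magnitude(frame: Sequence[float]) -> List[float]:
    """Magnitude spectrum |X[k]| for bins k = 0..N/2 (naive O(N^2) DFT)."""
    n = len(frame)
    return [
        abs(sum(frame[t] * cmath.exp(-2j * math.pi * k * t / n) for t in range(n)))
        for k in range(n // 2 + 1)
    ]


def dct_ii(values: Sequence[float]) -> List[float]:
    """Unnormalized type-II discrete cosine transform."""
    n = len(values)
    return [
        sum(v * math.cos(math.pi * k * (2 * i + 1) / (2 * n)) for i, v in enumerate(values))
        for k in range(n)
    ]


def cepstral_coefficients(frame: Sequence[float], num_coeffs: int = 10) -> List[float]:
    """Window -> |DFT| -> log -> DCT -> keep the first num_coeffs coefficients."""
    n = len(frame)
    # Hamming window (an assumed, common choice) reduces leakage at the frame edges.
    windowed = [x * (0.54 - 0.46 * math.cos(2 * math.pi * t / (n - 1))) for t, x in enumerate(frame)]
    # A small epsilon avoids log(0) for silent bins.
    log_spectrum = [math.log(m + 1e-10) for m in dft_magnitude(windowed)]
    return dct_ii(log_spectrum)[:num_coeffs]


def cepstral_mean_normalize(frames: List[List[float]]) -> List[List[float]]:
    """Subtract each dimension's mean over the utterance (cepstral mean normalization)."""
    dims = len(frames[0])
    means = [sum(f[d] for f in frames) / len(frames) for d in range(dims)]
    return [[f[d] - means[d] for d in range(dims)] for f in frames]


def diag_gmm_log_likelihood(
    x: Sequence[float],
    weights: Sequence[float],
    means: Sequence[Sequence[float]],
    variances: Sequence[Sequence[float]],
) -> float:
    """log p(x) under a mixture of diagonal-covariance Gaussians (one HMM state)."""
    component_lls = []
    for w, mu, var in zip(weights, means, variances):
        ll = math.log(w)
        for xi, mi, vi in zip(x, mu, var):
            ll -= 0.5 * (math.log(2 * math.pi * vi) + (xi - mi) ** 2 / vi)
        component_lls.append(ll)
    # log-sum-exp over components for numerical stability.
    top = max(component_lls)
    return top + math.log(sum(math.exp(c - top) for c in component_lls))


if __name__ == "__main__":
    sample_rate = 8000  # hypothetical
    # One 25 ms frame (200 samples) of a synthetic tone standing in for speech.
    frame = [math.sin(2 * math.pi * 440 * t / sample_rate) for t in range(200)]
    coeffs = cepstral_coefficients(frame)
    print(len(coeffs))  # 10
    print(diag_gmm_log_likelihood(coeffs[:2], [1.0], [[0.0, 0.0]], [[1.0, 1.0]]))
```

Working in the log domain throughout mirrors how recognizers combine many per-frame likelihoods without numerical underflow; the diagonal covariance keeps each Gaussian's cost linear in the feature dimension.
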
Many systems use so-called discriminative training techniques that dispense with a purely statistical approach to HMM parameter estimation and instead optimize some classification-related measure of the training data. Examples are maximum mutual information (MMI), minimum classification error (MCE) and minimum phone error (MPE). Decoding of the speech (the term for what happens when the system is presented with a new utterance and must compute the most likely source sentence) would probably use the Viterbi algorithm to find the best path, and here there is a choice between dynamically creating a combination hidden Markov model, which includes both the acoustic and language model information, and combining it statically beforehand (the finite state transducer, or FST, approach). A possible improvement to decoding is to keep a set of good candidates instead of just keeping the best candidate, and to use a better scoring function (rescoring) to rate these good candidates so that we may pick the best one according to this refined score. The set of candidates can be kept either as a list (the N-best list approach) or as a subset of the models (a lattice). Rescoring is usually done by trying to minimize the Bayes risk. The loss function is usually the Levenshtein distance, though it can be different distances for specific tasks; the set of possible transcriptions is, of course, pruned to maintain tractability.
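
A minimal sketch of the decoding and rescoring ideas in the paragraph above, assuming a toy discrete-observation HMM: `viterbi` finds the single best state path in log space, `levenshtein` computes a word-level edit distance, and `mbr_select` picks from an N-best list the hypothesis with the lowest expected Levenshtein loss (a direct minimum-Bayes-risk choice). The states, symbols, probabilities and N-best posteriors are all made up for illustration; real decoders score continuous feature vectors and usually search lattices.

```python
import math
from typing import Dict, List, Sequence, Tuple


def viterbi(
    obs: Sequence[str],
    states: Sequence[str],
    log_start: Dict[str, float],
    log_trans: Dict[str, Dict[str, float]],
    log_emit: Dict[str, Dict[str, float]],
) -> Tuple[List[str], float]:
    """Most likely hidden-state path for a discrete-observation HMM, in log space."""
    # best[s]: best log-probability of any partial path ending in state s.
    best = {s: log_start[s] + log_emit[s][obs[0]] for s in states}
    backpointers: List[Dict[str, str]] = []
    for o in obs[1:]:
        prev, best, ptr = best, {}, {}
        for s in states:
            p = max(states, key=lambda r: prev[r] + log_trans[r][s])
            best[s] = prev[p] + log_trans[p][s] + log_emit[s][o]
            ptr[s] = p
        backpointers.append(ptr)
    # Trace back from the best final state.
    last = max(states, key=lambda s: best[s])
    path = [last]
    for ptr in reversed(backpointers):
        path.append(ptr[path[-1]])
    return path[::-1], best[last]


def levenshtein(ref: Sequence[str], hyp: Sequence[str]) -> int:
    """Word-level edit distance (substitution, insertion, deletion each cost 1)."""
    prev = list(range(len(hyp) + 1))
    for i, r in enumerate(ref, start=1):
        cur = [i]
        for j, h in enumerate(hyp, start=1):
            cur.append(min(prev[j] + 1, cur[j - 1] + 1, prev[j - 1] + (r != h)))
        prev = cur
    return prev[-1]


def mbr_select(nbest: List[Tuple[List[str], float]]) -> List[str]:
    """Choose the N-best hypothesis with minimum expected Levenshtein loss.

    nbest: (word list, posterior) pairs; posteriors are assumed to sum to 1.
    """
    def expected_loss(hyp: List[str]) -> float:
        return sum(p * levenshtein(other, hyp) for other, p in nbest)

    return min((h for h, _ in nbest), key=expected_loss)


if __name__ == "__main__":
    lg = math.log
    states = ["A", "B"]  # hypothetical two-state model
    log_start = {"A": lg(0.6), "B": lg(0.4)}
    log_trans = {"A": {"A": lg(0.7), "B": lg(0.3)}, "B": {"A": lg(0.4), "B": lg(0.6)}}
    log_emit = {"A": {"x": lg(0.9), "y": lg(0.1)}, "B": {"x": lg(0.2), "y": lg(0.8)}}
    path, score = viterbi(["x", "x", "y", "y"], states, log_start, log_trans, log_emit)
    print(path, math.exp(score))  # ['A', 'A', 'B', 'B'] with probability about 0.0392

    nbest = [("x y z".split(), 0.40), ("a b c".split(), 0.35), ("a b d".split(), 0.25)]
    print(mbr_select(nbest))  # ['a', 'b', 'c'], although "x y z" has the top posterior
```

The N-best example shows why rescoring by Bayes risk can differ from simply taking the top-scoring candidate: "a b c" is close to the other likely hypotheses, so its expected word-error loss is lower.
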


Archives

November 2017
